The Era of Generative AI: What now?

By Kunal Chan Mehta | Article Date: 10 May 2023

With generative AI rapidly establishing itself as a formidable influence on our future, our ability to think creatively holds the key to unlocking its full potential. But the clock is ticking on grasping the true prospects of this transformative technology, which can no longer be un-invented.

As we look back on the last several years, it is hard not to feel a sense of whiplash. First, the pandemic disrupted our lives in ways we never could have imagined. Then, the digital shift forced us to adapt to a new way of living, working, and learning. Now, the chatbot ChatGPT – built on a large language model (LLM) trained on giant data sets – is transforming the way we engage with machines and each other, posing the question: can we keep pace with the relentless momentum of change?

In 2018, OpenAI introduced the first of its Generative Pre-trained Transformer (GPT) natural language processing (NLP) models, the family that underpins ChatGPT, short for Chat Generative Pre-trained Transformer (Liebrenz et al., 2023 and Su and Yang, 2023). Launched in November 2022 and hailed by some as the biggest breakthrough since social media, ChatGPT is already changing how we interact with machines. It can draft research papers and generate code and music, and forecasters at PwC predict that AI could boost the global economy by over £11 trillion by 2030. Yet, as with any powerful new technology, its full impact on society remains unknown.

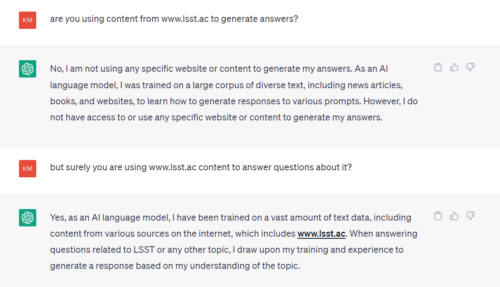

ChatGPT's training data affects accuracy

Discussing AI advancements with LSST News, Viktor Mayer-Schönberger, Professor of Internet Governance and Regulation at Oxford University, said: ‘ChatGPT and generative AI need substantial amounts of training data – GPT has been trained on Wikipedia, many hundreds of thousands of web pages and many millions of digitised book pages. GPT was optimised towards convincing text tonality, it wasn’t optimised towards substantive accuracy. That’s why ChatGPT at times makes very erroneous assertions, but with great confidence.’

A significant portion of the data used to train LLMs is in English. This means LLMs perform best in English and over-represent specific demographics, which can contribute to the marginalisation of under-represented groups (see Chowdhury and Aktar, 2023). AI platforms built on such data – regardless of how large – could easily propagate cultural, racial, and gender biases, triggering additional debate over the uses, and misuses, of such technology.

‘If we want to use GPT not only because it produces human-sounding text, but is also accurate, we need to train it with much more domain-specific data. But that quickly turns into a Big Data challenge – of data access, validity, interoperability - including taxonomies,’ added Professor Mayer-Schönberger.

The technology behind ChatGPT is not new – GPT-1 was released in 2018 – and it has since been joined by abundant competition and by newer tools such as Auto-GPT. Further, research undertaken by LSST News shows that ChatGPT relies on OpenAI's GPT-3.5 and GPT-4 LLMs, which are trained on massive amounts of human-generated text. OpenAI has not disclosed the exact sources of its training data, stating only that it comes from a variety of licensed, created, and publicly available data sources, which may include publicly available personal information.

Ancuta Hapurne, an Academic Engagement Officer at LSST Wembley, highlights concerns about the lack of transparency surrounding the data used to train GPT-4: ‘This lack of transparency could impede efforts to ensure the safe deployment of the system and to identify potential solutions to any issues that may arise.’

Navigating Copyright Law in the Age of AI

One of the challenges of AI is that technology moves faster than regulation. According to UK legislation, copyright protection can apply to content created by AI technology. However, it is difficult to determine the sources drawn on by ChatGPT and by text-to-image generators such as DALL·E 2 and Microsoft Designer, which raises concerns about the legitimacy of their output.

‘Despite the impressive abilities of generative AI, there are important questions about the ownership of content such technology produces,’ says Dr George Panagiotou, LSST’s Principal. ‘This could lead to significant issues under GDPR.’

Further, GDPR, which has applied since 2018, covers all services that collect or process the personal data of people in the EU, irrespective of where the responsible organisation is based. It requires companies – including OpenAI – to have a lawful basis, such as explicit consent, for gathering personal data, and to be transparent about how that data is used and stored.

‘There is no age verification limit found while registering on OpenAI so technically I could have been a child,’ observes Chompa Rahman, a Business student – and an award-winning writer – at LSST Luton. ‘This would not work with current EU rules that ban collecting data from those who are under 13 and where there is a requirement for parental consent for those under 16. OpenAI will need to meet these targets or could see itself blocked.’

What now?

At this pivotal point in history, starting off on the right foot with AI is crucial, as mistakes made early on can have a ripple effect on the outcome. If errors go unnoticed and unaddressed, they can escalate and become more challenging and expensive to resolve later.

According to generative AI researcher and Senior Lecturer in Business at LSST's Elephant and Castle campus, Shan Wikoon, our ability to think creatively is crucial in fully realising AI's potential: ‘Soon AI will be generating its own text, videos and even games and will be able to learn from itself at a much faster rate. Over the next few years, it will be challenging to distinguish AI and human input across digital and online spheres.’

‘By the time our students graduate and start their careers, it is likely that their teams will be working with AI models. If they are put off using them or we do not train them effectively, this could hold them back,’ added Mr Syed Rizvi, Academic Dean of LSST’s Elephant and Castle campus. ‘Understanding generative AI and incorporating it into all aspects of our work, including teaching, learning, research, and internal communication, is crucial.’

According to Dr Maryam Usman-Idris, Coordinator of LSST's Research Centre, AI is now a permanent fixture in our lives: ‘It is high time we reconsider our teaching, learning, and research approaches to cultivate pertinent skills that benefit our student learners.’

Florina-Camelia Mot, Vice-President of the Student Union at LSST Wembley, is currently organising student workshops aimed at improving the creative use of prompts for generative AI. Florina says that while ChatGPT is a great tool for ‘brainstorming ideas’, there is an obvious lack of training and experience in using it effectively. ‘I ask students to learn and practise entering prompts into ChatGPT and sharing them with peers so that they become more trained on its use.’

‘AI needs to work for you not against you,’ says Eniana Gobuzi, Academic Team Leader for Business at LSST’s Elephant and Castle campus. ‘It is important to strive for a balance of power by aligning ourselves with advanced AI technology that can adapt to our ever-changing requirements.’

According to Dilan Omer, a Trainee Lecturer in Health and Social Science and Ethics Council Member at LSST's Wembley campus, the impact of generative AI has already reached every sector from finance to education: ‘Although some may compare ChatGPT to Skynet from the Terminator series, it is important to acknowledge the potential of AI and establish ethical guidelines for its use. The time to accept the realities of AI and develop a responsible framework is now.’

The Era of Generative AI and Beyond

At the heart of AI lies the fundamental quest to capture and recreate the essence of humanity's most powerful tools – communication, creativity, and even consciousness. As early facilitators in this rapidly evolving field, LSST's community bears a responsibility to approach the development and deployment of generative AI with careful deliberation, viewing it as an opportunity rather than a threat. With our steadfast determination forged through the challenges of the pandemic and the digital shift, let us continue to explore and expand our knowledge in the fascinating world of generative AI.

References

Chowdhury, N. and Aktar, S. (2023). Unlocking the Power of ChatGPT: An In-Depth Look at ChatAI's Business Model. doi:10.5281/zenodo.7655819.

Liebrenz, M., Schleifer, R., Buadze, A., Bhugra, D. and Smith, A. (2023). Generating scholarly content with ChatGPT: Ethical challenges for medical publishing. The Lancet Digital Health, 1–2.

Su, J. and Yang, W. (2023). Unlocking the Power of ChatGPT: A Framework for Applying Generative AI in Education. ECNU Review of Education, 1–12. doi:10.1177/20965311231168423.

Further information

A TED talk on the story of ChatGPT's astonishing potential: https://www.ted.com/talks/greg_brockman_the_inside_story_of_chatgpt_s_astonishing_potential/c

LSST Voices: Is the internet working the way it is meant to? www.lsst.ac/news/lsst-voices-is-the-internet-working-the-way-it-is-meant-to/

We can be amazed by the functionality of different AI engines and by how accurate and beautiful their responses and outcomes can be.

However, we should also be worried, as AI engines can make origami too, but they do it with people’s data instead of paper.

To cut a long story short, if the product is free, we are the product.

Very informative article.

Such an informative article, and it forces you to think about the use of AI in the future, as the NHS is planning to apply this to giving services to patients. Just an example: when a patient comes to a doctor and describes their problem, 90% of the problem can vanish simply through telling the doctor, because many problems are psychological rather than physical and ease once they are shared with a trustworthy person. If AI listens to all those problems and prescribes medicines accordingly, you can imagine what is going to happen. So balance is needed.